I am the head of data at Reforge (we’re hiring!) and data is critical to our success. We use it to track our business, individual teams leverage it in their work, and we believe in empowering people in the company with data to help them do great work. We use all sorts of great tools, but as the head of data I felt it was important to understand whether people were actually using our BI (business intelligence) tool.

I built a weekly report that lays out how my company is using our BI tool, Metabase. It has the following reports:

- Dashboards created in the past 7 days

- Questions created in the past 7 days

- Individual Dashboard views by our exec team in the past 7 days

- Dashboard view counts by user in the past 7 days

- Activity by my boss (our CTO)

- Metabase MAUs

- Metabase WAUs

It helps me to understand at a high level what is happening inside the company from a data perspective:

- Who is building reports (questions / dashboards)

- What questions people are asking of our data

- What is most important to our exec team

- Who is looking at information on a regular basis

- What is top of mind for my boss

- How engaged our company is with our data & analytics

It was surprisingly easy to set this up. All we had to do was add the Metabase internal database as a source to Metabase (sounds confusing, but actually quite simple). It wouldn’t shock me if this became a paid feature long-term for Metabase on their enterprise plan, but it took less than 30 minutes to figure out their data model and build some quick and dirty SQL questions that produced answers to our most important questions. Metabase is already storing all of the data we needed to visualize to get answers to our questions.

I set this up as a dashboard subscription so it is delivered to my email every week and I can quickly scan it to understand what’s top of mind for the org.

Reforge is Data Driven

Since the beginning of the company we’ve looked at data to understand how well we’re tracking against key goals for the company (revenue, applications, content completion, weekly activity, etc). These stats span across the entire company in terms of usefulness (support, sales, marketing, product, engineering, design, finance, etc) and we strive to make as much information accessible to everyone so that they can be data informed.

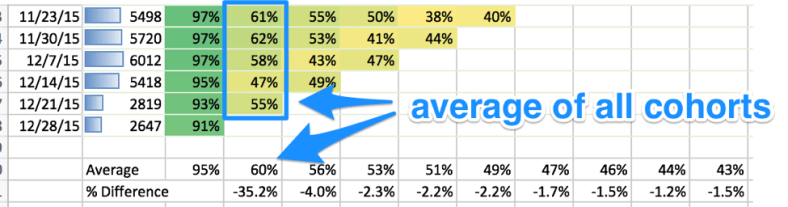

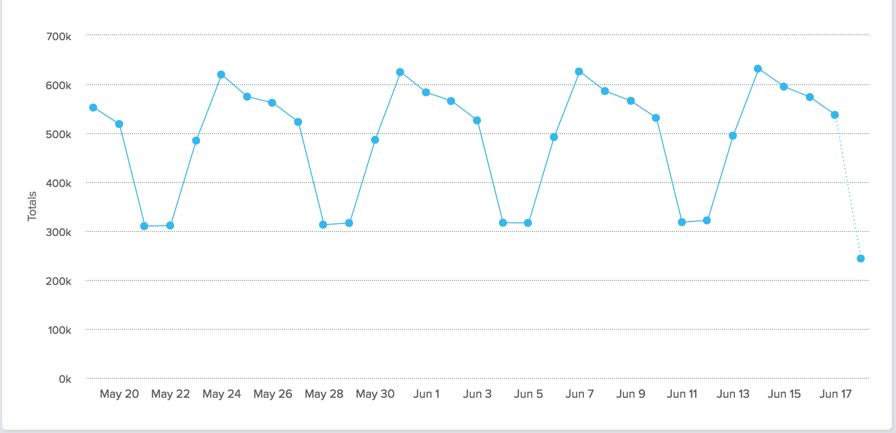

If you’re considering joining a company – do you want to be at one that uses data to help inform decisions? You can see below that Reforge employees are using data on a regular basis and you can see how it scales as we’ve grown the team and hit key milestones. We’ve typically seen 75-100% of employees leveraging Metabase on a monthly basis.

The queries that we use to power the charts:

Dashboards created in the past week

select created_at, name, u.email, left(description, 40) as description, 'https://BI_HOSTNAME/dashboard/' || d.id as url

from report_dashboard d

inner join core_user u

on d.creator_id = u.id

where created_at > current_date - interval '7 days'

order by 1 descQuestions created in the past week

select created_at, name, u.email, 'https://BI_HOSTNAME/question/' || d.id as url

from report_card d

inner join core_user u

on d.creator_id = u.id

where created_at > current_date - interval '7 days'

order by 1 descExec team dashboard views in the past week

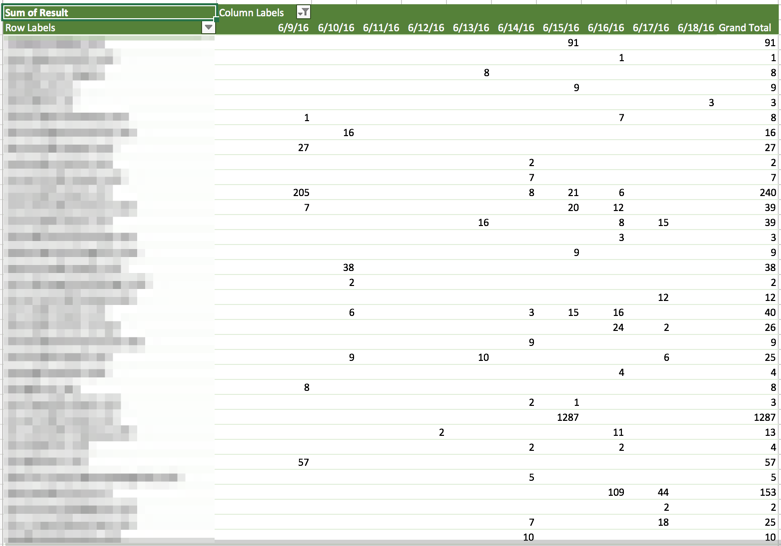

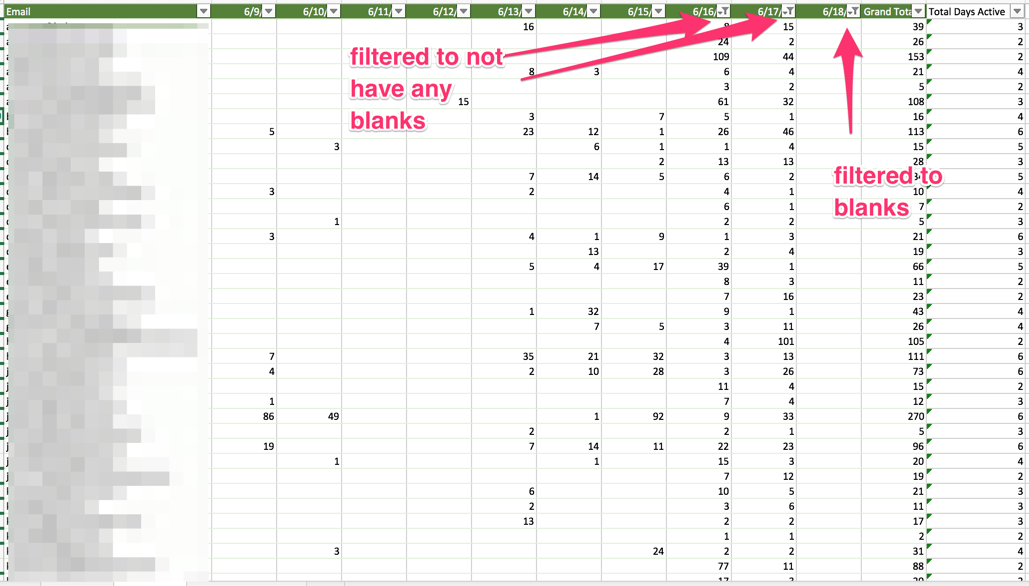

For this report, I wanted to know what the exec team was looking at in the past week. I want to know how much they’re looking at the metrics and whether they’re looking at the dashboards I think they should be looking at.

select u.email, d.name, count(v.id)

from view_log v

inner join core_user u

on v.user_id = u.id

inner join report_dashboard d

on v.model_id = d.id

where model = 'dashboard'

and u.email in

(LIST_OF_EMAILS)

and v.timestamp > (current_date - interval '7 days')

group by 1, 2

order by 3 desc

limit 200Dashboard views leaderboard (views in the past week)

This shows the number of views by user in the past 7 days. It’s always a good sign when your head of data isn’t the most prolific viewer of dashboards.

select u.email, count(v.id)

from view_log v

inner join core_user u

on v.user_id = u.id

inner join report_dashboard d

on v.model_id = d.id

where model = 'dashboard'

and v.timestamp > (current_date - interval '7 days')

group by 1

order by 2 desc

limit 200Most popular dashboards by views

select d.name, count(v.id)

from view_log v

inner join core_user u

on v.user_id = u.id

inner join report_dashboard d

on v.model_id = d.id

where model = 'dashboard'

and v.timestamp > (current_date - interval '7 days')

group by 1

order by 2 desc

limit 200Questions created by a single person

select created_at, name, u.email, 'https://BI_URL/question/' || d.id as url

from report_card d

inner join core_user u

on d.creator_id = u.id

where created_at > current_date - interval '28 days'

and u.email = EMAIL_OF_YOUR_BOSS

order by 1 descMetabase MAUs

select date_trunc('month', timestamp), count(distinct user_id)

from view_log

group by 1

order by 1Metabase WAUs

select date_trunc('week', timestamp), count(distinct user_id)

from view_log

group by 1

order by 1

Recent Comments